
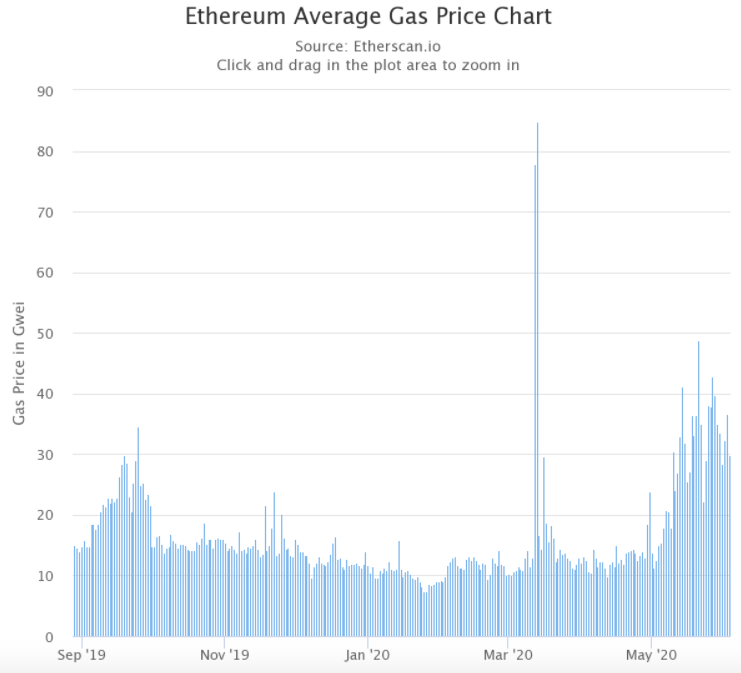

Author | Danny Ryan Translator | Nuka-Cola Planning | Chu Xingjuan

Since the end of April, the Ethereum network has been extremely congested. Data shows that the average gas price has more than tripled since the beginning of May, rising by an average of 30 Gwei over the past few days. As a result, according to EthGasStation, a simple ETH transfer now costs $0.16 on average, and that is the cheapest kind of transaction in terms of gas. ERC-20 token transfers and smart contract calls can cost several times as much.

The fee increase has already had a significant impact on gaming DApps. DappRadar data shows that activity on gaming DApps dropped significantly in May, while other types of DApps grew slightly.

Ultimately, the network is congested because it cannot currently support the growing transaction volume. Eth 2.0 is a scalable proof-of-stake infrastructure. In the upcoming upgrade, the protocol will move from PoW to PoS consensus and introduce improvements in scalability, security and performance.

So how far along is Eth 2.0? Ethereum 2.0 coordinator Danny Ryan recently published an article detailing its latest developments and plans.

Eth 2.0 status quo

The team is currently working hard toward launching Phase 1, which is about reaching consensus on a large amount of data. It is expected that 64 shards will be launched initially, with total data availability across the system of approximately 1 to 4 MB per second.

Note: The launch of Eth 2.0 is divided into four phases: Phase 0, Phase 1, Phase 1.5 and Phase 2.

The goal of Phase 0 is to reach consensus among thousands of nodes and hundreds of thousands of consensus entities (validators) spread across the world. The difficulty at this stage is ensuring that the blockchain can handle such a large number of validators.
Other non-sharded proof-of-stake mechanisms often have only hundreds or thousands of validators, whereas Eth 2.0 requires a minimum of roughly 16,000 validators, and this number is expected to grow to hundreds of thousands within two years.

Phase 1 is about reaching consensus on a large amount of data. The "things" that reach consensus take the form of shard chains. Validators from the beacon chain are given random short-term assignments to build and verify each shard chain, make crypto-economic commitments to the state, availability and validity of each chain, and finally report the results back to the core system.

Phase 1.5 integrates the Ethereum mainnet into the new Eth 2.0 consensus mechanism as a shard (one of the many shards created in Phase 1). Unlike with Ethereum's traditional proof-of-work mining, the chain will be built by Eth 2.0 validators. This hot swap of the consensus mechanism will be largely transparent, and applications will keep running as before.

Phase 2 adds state and execution. This can take many forms. In current research and prototyping, the team's main job is to work out which form is best and the details behind that choice.

Eth 2.0 client and testnet status

Over the past two years of Phase 0 work, Eth 2.0 clients have evolved into sophisticated pieces of software capable of handling distributed consensus among tens of thousands of validators on thousands of nodes. They have now entered the testnet stage and are moving step by step toward full launch.

Danny Ryan encourages everyone to try multiple clients, while keeping a balance between stability and exploration. In addition, there is an anti-correlation incentive mechanism built into the protocol.
In the extreme case, if a major client inadvertently takes validators offline or causes them to commit a slashable offence, the validators running it will be penalized very severely, much more severely than they would be for an isolated fault. In other words, under such a system, running a minority client that is just as secure as the majority client is the safer choice, because it limits your losses when a widely used client fails. It should be emphasized that if there are multiple clients that are secure and suit your needs, it is your responsibility as a user to actively choose among them so as to promote a healthy distribution of clients on the network.

Testnet status

Currently, a small public development network is running, restarted approximately every one to two weeks. This "development network" is mainly used by client team developers for bug fixing and optimization. It is completely public, but it is not as mature as Goerli or Rinkeby.

The latest testnet release, Witti, led by Afri Schoedon, currently runs version 0.11 of the specification (if you plan to run a node, see the testnet documentation). The client teams are actively upgrading to version 0.12 of the specification, which integrates the latest version of the IETF BLS standard. From there, the team will continue to expand the network, increase the load on clients, and eventually move the development network fully to version 0.12.

After 2 to 3 clients have been launched successfully and have run under heavy load on the version 0.12 network, the team will open a more public testnet where developers can run most of the nodes and validators.
The goal of the testnet is to create a long-lived multi-client test environment that simulates mainnet operating conditions as closely as possible, where users can reliably practice running nodes and test whatever they wish. Ideally, the testnet would be launched only once, with any faults triaged during subsequent network maintenance. However, depending on the nature and severity of the failures, the team may need several launches to get there.

In addition to the ordinary testnet, the team will also provide an incentivized "attack net", where client teams run stable testnets and invite participants to attack them in different ways. Successful attacks will be rewarded with ETH.

The current state of Eth 2.0 tools

Although Eth 2.0 tooling is still in its infancy, it has already produced many exciting results. Tool contributions come primarily from client code bases and client teams, but other contributors are also starting to make their mark. To better interact with, understand, protect and enhance Eth 2.0, the whole community needs to build out a larger Eth 2.0 ecosystem. Eth 2.0 tooling represents an unprecedented opportunity: everyone can find value here and achieve real success.

The following are the directions currently under development, and more work is also in progress:

Here are some creative examples of open tools:

In current Ethereum clients (such as geth), almost all the complexity lies in handling user-level activity: the transaction pool, block creation, virtual machine computation, and state storage and retrieval. The core consensus in the protocol (proof of work) is actually quite simple, and much of its complexity is handled by sophisticated hardware outside the core protocol.

Eth 2.0 clients, on the other hand, are almost entirely about consensus. With proof of stake and sharding, most of the complexity is built into the protocol in order to achieve scalable consensus.

This difference in focus makes eth1 and Eth 2.0 clients a perfect match. Members of the geth (EF) and TXRX (ConsenSys) teams are currently working on merging the two. This work includes:

The path to normal execution across multiple shards has always been a widely debated technical problem. In this regard, the team needs to answer many practical questions, including:

The eWASM (EF) and Quilt (ConsenSys) teams are investing significant research resources in these areas. It turns out that there are many possible solutions, and the main challenge now is to find simpler, more pragmatic ones that can be quickly tested, prototyped and discussed. This is how eWASM's Eth1 x64 project was born. Turning abstract cross-shard ideas into concrete specifications, then discussing and designing around them, has helped the team make rapid progress. DApp developers should pay close attention to this work in the coming months.

The relationship between stateless Ethereum and Eth 2.0

Another major research effort advancing in parallel with Eth 2.0 is "Stateless Ethereum". Its core purpose is to address the continuous growth of state size. With it, participants can verify blocks without storing the complete blockchain state locally. Today, Ethereum's state transition function has an implicit input: the entire state. With stateless Ethereum, the necessary state proofs (witnesses) are included in the block itself, so that a block can be processed and verified as a pure function.

For users, this means Ethereum becomes a set of interlocking but independent worlds, in which each participant only needs to track the parts of the state they care about. Some network participants may store all state (e.g. block producers, block explorers, paid state providers), but the vast majority only need to know a portion of it.

For Eth 2.0, this is an important mechanism for ensuring that nodes and validators can verify and secure the protocol without storing the complete user state of every shard. Instead, validators may opt in to acting as block producers for certain shards, while baseline validators may simply validate stateless blocks.
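
To make the idea of "a block verified as a pure function" concrete, here is a minimal sketch. It assumes a toy binary Merkle tree over SHA-256 rather than Ethereum's actual state trie and hash function, and every name in it (`merkle_root`, `make_witness`, `apply_stateless`) is hypothetical, chosen only for illustration. A node holding the full state builds the witness; a stateless validator needs only the pre-state root, the touched leaf and the witness.

```python
import hashlib
from typing import List


def h(data: bytes) -> bytes:
    """Hash helper (SHA-256 is an assumption made for this sketch)."""
    return hashlib.sha256(data).digest()


def merkle_root(leaves: List[bytes]) -> bytes:
    """Root of a binary Merkle tree; the leaf count must be a power of two."""
    layer = [h(leaf) for leaf in leaves]
    while len(layer) > 1:
        layer = [h(layer[i] + layer[i + 1]) for i in range(0, len(layer), 2)]
    return layer[0]


def make_witness(leaves: List[bytes], index: int) -> List[bytes]:
    """Sibling hashes from leaf to root, built by a node that holds full state."""
    layer = [h(leaf) for leaf in leaves]
    branch = []
    while len(layer) > 1:
        branch.append(layer[index ^ 1])  # sibling of the current node
        layer = [h(layer[i] + layer[i + 1]) for i in range(0, len(layer), 2)]
        index //= 2
    return branch


def root_from_branch(leaf: bytes, index: int, branch: List[bytes]) -> bytes:
    """Recompute the root from one leaf and its sibling hashes."""
    node = h(leaf)
    for sibling in branch:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node


def apply_stateless(pre_root: bytes, index: int, old_leaf: bytes,
                    new_leaf: bytes, branch: List[bytes]) -> bytes:
    """Pure function: check the witness against pre_root, return the post-state root."""
    if root_from_branch(old_leaf, index, branch) != pre_root:
        raise ValueError("witness does not match pre-state root")
    return root_from_branch(new_leaf, index, branch)
```

The validator in this sketch never sees the other leaves: the same sibling hashes that prove the old value also let it compute the new root, which is why the witness can travel inside the block.
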
Stateless Ethereum will be an important complement to the Eth 2.0 vision, helping keep the sharded protocol lightweight. Of course, if the stateless route ultimately proves infeasible, the team has also prepared alternative options.

Challenges of Eth 2.0

In the current Eth 2.0 work, the main challenge comes from the sheer number of validators, shards and clients.

The key to sharding is that consensus participants (i.e. validators) are randomly sampled into committees that validate specific parts of the protocol (e.g. shards). If the protocol has enough validators, then even an attacker who controls a large fraction of them (e.g. 1/3 of all validators) has a vanishingly small chance of controlling a committee and disrupting the system (the probability of success is generally around 1 / 2^40). To achieve this, the team needs to design the system so that users can act as validators on consumer-grade devices (such as laptops or even old mobile phones): each validator is assigned to a sub-part of the system, and a single device has enough computing resources to verify that sub-part. It is this design idea that makes sharding both powerful and difficult to implement.

First, there must be enough validators to ensure that random sampling cannot be controlled by malicious actors. As a result, eth2 will naturally have many more validators than most other proof-of-stake protocols, which creates challenges throughout the process, including consensus research and specification development, networking, resource consumption and client optimization. Each new validator adds load at various points in the system, and this has to be taken into account.
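
The committee-sampling argument above can be checked with a short calculation. The sketch below makes several assumptions that are not stated in the article: committee members are sampled independently (a binomial approximation of sampling without replacement from a very large validator set), the attacker needs at least 2/3 of a committee to control it, and the committee sizes tried are illustrative. The function name `takeover_probability` is hypothetical.

```python
from fractions import Fraction
from math import comb


def takeover_probability(committee_size: int,
                         attacker_share: Fraction = Fraction(1, 3),
                         threshold: Fraction = Fraction(2, 3)) -> Fraction:
    """Probability that an attacker holding `attacker_share` of all validators
    gets at least `threshold` of the seats in one randomly sampled committee.

    Assumption: seats are filled independently, so the attacker's seat count
    follows a binomial distribution.
    """
    n = committee_size
    # Smallest number of seats that meets the threshold (integer ceiling).
    needed = -(-threshold.numerator * n // threshold.denominator)
    p, q = attacker_share, 1 - attacker_share
    # Exact binomial tail, summed with rational arithmetic to avoid rounding.
    return sum(comb(n, k) * p**k * q**(n - k) for k in range(needed, n + 1))


if __name__ == "__main__":
    target = Fraction(1, 2**40)
    for size in (16, 64, 128):  # illustrative committee sizes
        prob = takeover_probability(size)
        print(size, float(prob), "below 2^-40" if prob < target else "above 2^-40")
```

Under these assumptions, the takeover probability shrinks rapidly as committees grow, which is why the protocol needs enough validators to fill every committee with a sufficiently large random sample.
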
Beyond the large number of validators, another fundamental decision also makes building harder. With Ethereum, the team hopes to increase scalability while minimizing the impact on decentralization. That requires a sharded consensus mechanism that splits the system into smaller verifiable pieces, and designing and implementing such a mechanism is extremely difficult.

Ethereum is, above all, a protocol. It is the abstract set of rules that make up the protocol, rather than any specific implementation of those rules. For this reason, the Ethereum community has encouraged multiple client implementations since its inception. On today's Ethereum mainnet you can find besu, ethereumJS, geth, nethermind, nimbus, open-ethereum, trinity and turbo-geth, among others. In eth2 there are cortex, lighthouse, lodestar, nimbus, prysm, teku and trinity.

The multi-client paradigm has several important advantages:

However, too many clients will also bring the following negative effects:

Reference reading: https://blog.ethereum.org/2020/06/02/the-state-of-eth2-june-2020/